The artificial intelligence landscape in March 2026 has reached a definitive turning point. As organizations and individual professionals seek to integrate more autonomous agents into their daily operations, the rivalry between OpenAI and Anthropic has intensified to unprecedented levels. With the recent surprise release of GPT-5.4 and the steady dominance of Claude Opus 4.6, the question on everyone’s mind is clear: In the battle of GPT-5 vs Claude 4, which model truly dominates the industry?

In this comprehensive comparison, we will dive deep into the benchmarks, coding capabilities, and reasoning power of these two titans. Whether you are a software engineer, a creative marketer, or someone looking for ways to make money online in 2026, choosing the right AI partner is essential for maintaining a competitive edge in this rapidly evolving digital economy.

🧠 Reasoning and Intelligence: The Rise of “Thinking Mode”

One of the most significant shifts in 2026 is the arrival of dedicated reasoning layers. OpenAI’s GPT-5.4 ships a revamped “Thinking Mode” that lets the model pause, verify its logic, and present a plan of its reasoning before generating a final answer. This “System 2” thinking has resulted in a 91% score on the MMLU benchmark, up from GPT-4o’s 86%.

In the other corner, Anthropic’s Claude Opus 4.6 uses a sophisticated “Adaptive Thinking” system. Unlike GPT’s manual settings, Claude can automatically judge the complexity of a problem and dynamically allocate the necessary reasoning depth. This allows Claude to excel in “Humanity’s Last Exam,” a complex multidisciplinary test where it currently leads GPT-5.4 by about 13.3%.

💻 Coding and Development: A High-Stakes Duel

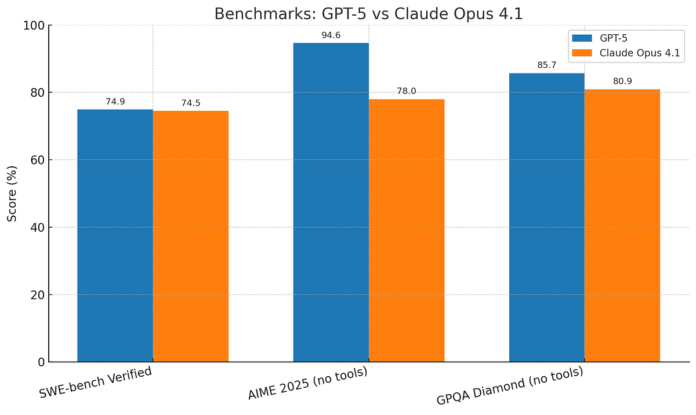

For developers managing complex web applications, the choice between GPT-5 vs Claude 4 often comes down to specific coding benchmarks.

- Claude 4 for Architecture: Claude Opus 4.6 remains the “coding champ” for large-scale refactoring. Its 80.8% score on SWE-bench Verified (a test of real-world GitHub issue resolution) is the highest in the industry. It plans more carefully, sustains agentic tasks for longer, and produces significantly cleaner, more “Pythonic” code.

- GPT-5.4 for Speed and Automation: GPT-5.4 has shown massive improvements in high-difficulty tasks, scoring 57.7% on SWE-bench Pro. It is arguably faster at rapid prototyping and excels in terminal-based operations (75.1% on Terminal-Bench), making it a favorite for DevOps engineers and system administrators.

📁 Context Window: The Million-Token War

The ability to process massive datasets in one go is a crucial feature for enterprise-level AI. In 2026, both models have officially entered the Million-Token Class.

- GPT-5.4’s Recall: OpenAI officially supports a 1,050,000-token context window. Its “Perfect Recall” technology ensures that information buried in the middle of a document isn’t lost—a common flaw in earlier generations. This makes it ideal for analyzing entire legal libraries or customer databases.

- Claude 4’s Compaction: While Claude also supports a 1M token context (in beta), Anthropic focuses on Context Compaction. This feature automatically summarizes and replaces older parts of the conversation, allowing Claude to perform extremely long-running autonomous tasks without hitting memory limits.
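The compaction idea described above can be illustrated with a minimal sketch. This is not Anthropic’s implementation: `count_tokens`, `naive_summarize`, and `compact` are hypothetical names, the token counter simply counts words, and the summarizer is a placeholder where a real system would presumably call a model.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def naive_summarize(messages: List[str]) -> str:
    """Placeholder summarizer: keeps the first five words of each message."""
    heads = [" ".join(m.split()[:5]) for m in messages]
    return "[summary] " + " | ".join(heads)


def compact(
    history: List[str],
    budget: int,
    keep_recent: int = 2,
    summarize: Callable[[List[str]], str] = naive_summarize,
) -> List[str]:
    """Replace older messages with one summary once the history exceeds the budget."""
    total = sum(count_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return list(history)  # nothing to compact
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent


history = [
    "one two three four five six seven",
    "a b c d e f g h",
    "recent one",
    "recent two",
]
compacted = compact(history, budget=10)
```

The most recent messages are kept verbatim so the model still sees the live part of the task, while everything older collapses into a single entry.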

🤖 Agentic Capabilities and Computer Use

The year 2026 marks the era of Agentic AI, where models don’t just talk—they do.

- OpenAI’s Native Control: GPT-5.4 has built-in Native Computer Control, allowing it to operate software, browse the web, and execute multi-step processes across your desktop. Its OSWorld score of 75.0% has finally surpassed the human baseline, making it the superior choice for automating office workflows, spreadsheets, and presentations.

- Anthropic’s Agent Teams: Claude Opus 4.6 introduces Agent Teams, which let you assemble multiple specialized sub-agents to work on a task together. This “swarm” approach suits complex software engineering, where one agent writes code while another reviews it and a third runs tests.

🛡️ Ethics, Safety, and User Backlash

A significant factor in the GPT-5 vs Claude 4 debate this month is public perception. OpenAI recently faced backlash following a controversial agreement with the US Department of Defense, leading to a surge in ChatGPT uninstalls.

In contrast, Anthropic has capitalized on its “Constitutional AI” approach, refusing military partnerships. This has led to Claude reaching No. 1 on the US App Store for the first time in March 2026. For businesses in highly regulated sectors like healthcare and finance, Claude’s safety-first alignment remains a major selling point.

💰 Pricing: Who Offers Better Value?

Cost-efficiency is vital for scaling AI operations. In 2026, OpenAI has adopted an aggressive pricing strategy:

| Metric | GPT-5.4 (Standard) | Claude Opus 4.6 |
| --- | --- | --- |
| Input Price (per 1M tokens) | $2.50 | $5.00 |
| Output Price (per 1M tokens) | $15.00 | $25.00 |
| Cached Input Savings | 90% | 90% |
| Best For | Volume & Automation | Accuracy & Architecture |

At these list prices, GPT-5.4 is 50% cheaper than Claude Opus 4.6 on input tokens and 40% cheaper on output tokens, making it the better choice for high-volume data processing and budget-conscious startups.
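To see how the per-token prices in the table translate into a bill, here is a small sketch. The prices come from the table; the workload (10 million input tokens and 2 million output tokens) and the names `Pricing`, `cost`, and `savings_percent` are assumptions made for illustration, and cached-input discounts are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List prices in US dollars per 1M tokens."""
    input_per_million: float
    output_per_million: float


GPT_5_4 = Pricing(input_per_million=2.50, output_per_million=15.00)
CLAUDE_OPUS_4_6 = Pricing(input_per_million=5.00, output_per_million=25.00)


def cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Total cost in dollars for a workload, without cache discounts."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


def savings_percent(cheaper: float, pricier: float) -> float:
    """How much cheaper the first bill is, as a percentage of the second."""
    return round((1 - cheaper / pricier) * 100, 1)


# Hypothetical monthly workload: 10M input tokens, 2M output tokens.
gpt_cost = cost(GPT_5_4, 10_000_000, 2_000_000)             # 25 + 30 = 55 dollars
claude_cost = cost(CLAUDE_OPUS_4_6, 10_000_000, 2_000_000)  # 50 + 50 = 100 dollars
saving = savings_percent(gpt_cost, claude_cost)             # 45.0
```

The blended saving depends on the mix: input-heavy workloads land closer to 50%, output-heavy ones closer to 40%.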

Final Verdict: Which Model Dominates?

The winner of the GPT-5 vs Claude 4 battle depends on your professional requirements:

- Choose GPT-5.4 if: You need an all-around workhorse for office automation, native computer use, and cost-efficient processing of massive context windows. It is the king of general knowledge work and spreadsheet integration.

- Choose Claude 4 if: Your highest-value work is software engineering, deep architectural reasoning, or long-context document analysis. Its superior prose and higher safety standards make it the “premium” choice for technical specialists.

The smartest strategy for 2026 is a hybrid approach: Use Claude for the initial complex reasoning and architecture, and use GPT-5.4 (or its more efficient variant, GPT-5.3 Instant) for high-volume execution and automation.
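One way to picture the hybrid approach is a small routing table that sends each kind of task to the model the article recommends for it. This is a sketch under assumptions: `TaskKind`, `ROUTES`, and `pick_model` are hypothetical names, and the model strings are plain labels, not verified API identifiers.

```python
from enum import Enum


class TaskKind(Enum):
    ARCHITECTURE = "architecture"
    COMPLEX_REASONING = "complex_reasoning"
    BULK_EXECUTION = "bulk_execution"
    AUTOMATION = "automation"


# Labels only; check each provider's documentation for real model identifiers.
ROUTES = {
    TaskKind.ARCHITECTURE: "claude-opus-4.6",
    TaskKind.COMPLEX_REASONING: "claude-opus-4.6",
    TaskKind.BULK_EXECUTION: "gpt-5.4",
    TaskKind.AUTOMATION: "gpt-5.4",
}


def pick_model(kind: TaskKind) -> str:
    """Return the model label assigned to a task kind."""
    return ROUTES[kind]


plan = [
    (TaskKind.ARCHITECTURE, "design the service boundaries"),
    (TaskKind.BULK_EXECUTION, "classify 50,000 support tickets"),
]
assignments = [(pick_model(kind), task) for kind, task in plan]
```

Keeping the mapping in one table means the split can be revisited as prices and benchmarks change without touching the calling code.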